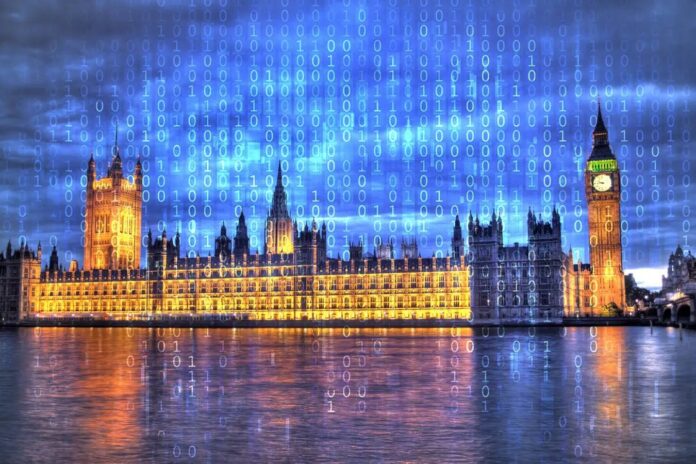

The Information Commissioner’s Office (ICO), the UK’s data-protection authority, has issued new guidance confirming that government staff’s use of artificial intelligence for work is subject to Freedom of Information (FOI) laws. The guidance clarifies that both the prompts entered by officials and the resulting text, images, or data generated by AI tools must be disclosed if requested by the public.

This development significantly strengthens transparency measures, potentially allowing journalists and citizens to access records of ministers using platforms like ChatGPT for official duties.

Clarifying the Legal Landscape

For some time, public authorities have faced ambiguity regarding how FOI applies to emerging technologies. The ICO’s new guidance removes this gray area by stating explicitly:

“If staff at a public authority use AI for work purposes, the information generated will be subject to FOIA along with the prompts used.”

Legal experts suggest this clarification will close loopholes previously exploited by government bodies. Jon Baines of the law firm Mishcon de Reya notes that it will now be “very difficult for public authorities to claim that AI-related requests are not subject to FOIA.”

The logic is straightforward: if information is recorded by a public servant in the course of their duties, it falls under FOI, regardless of whether it was written on a Post-it note or generated by a large language model. Tim Turner, a data-protection expert, argues this should be “uncontroversial,” emphasizing that the medium of record-keeping does not exempt content from transparency laws.

Impact on Transparency and Accessibility

This guidance could reshape how government transparency operates in the digital age. Two key implications stand out:

- Access to Decision-Making Processes: Citizens may now request the specific prompts used by officials, offering insight into how AI is influencing policy advice or administrative decisions.

- Overcoming Cost Barriers: The ICO suggests that public bodies could potentially use AI to summarize large datasets when responding to FOI requests. This could allow authorities to fulfill requests that were previously rejected on grounds of excessive cost, thereby increasing the volume of accessible information.

Precedent and Controversy

The guidance follows a landmark case last year, when New Scientist successfully obtained the ChatGPT logs of former UK Tech Secretary Peter Kyle. This is believed to be the first time anywhere in the world that such a request was granted. However, subsequent attempts by other outlets to access similar data were often rejected, with authorities labeling requests as “vexatious” or citing high processing costs.

The new guidance aims to prevent such blanket refusals. However, the move has sparked debate regarding privacy and practicality. Matt Clifford, chair of the UK’s Advanced Research and Invention Agency (ARIA), criticized the precedent set by the Kyle case, calling it “absurd” and “hugely corrosive.” He warned that such scrutiny could deter ministers from using AI tools altogether, stifling innovation in government operations. Notably, ARIA itself is exempt from FOI laws, highlighting the uneven application of transparency rules across different public bodies.

Why This Matters

The intersection of AI and governance raises critical questions about accountability and trust. As governments increasingly rely on AI for drafting communications, analyzing data, and formulating policy, understanding how these tools are used becomes essential for democratic oversight.

If officials can use AI to generate official outputs without public scrutiny, it creates a “black box” in governance. By confirming that AI logs are subject to FOI, the ICO ensures that the integration of artificial intelligence into public service remains transparent. This sets a vital precedent for other nations grappling with how to regulate AI in the public sector.

Conclusion

The ICO’s guidance establishes a clear boundary: AI usage in government is not a private sphere. While concerns about chilling effects on innovation persist, the guidance prioritizes the public’s right to know, so that the rapid adoption of AI does not outpace democratic accountability.